Cryptanalysis

|

|

Cryptanalysis (from the Greek kryptós, "hidden", and analýein, "to loosen" or "to untie") is the study of methods for obtaining the meaning of encrypted information without access to the secret information which is normally required to do so. Typically, this involves finding the secret key. In non-technical language, this is the practice of codebreaking or cracking the code, although these phrases also have a specialised technical meaning (see code).

"Cryptanalysis" is also used to refer to any attempt to circumvent the security of other types of cryptographic algorithms and protocols in general, and not just encryption. However, cryptanalysis usually excludes attacks that do not primarily target weaknesses in the actual cryptography; methods such as bribery, physical coercion, burglary, keylogging, and so forth, although these latter types of attack are an important concern in computer security, and are increasingly becoming more effective than traditional cryptanalysis.

Even though the goal has been the same, the methods and techniques of cryptanalysis have changed drastically through the history of cryptography, adapting to increasing cryptographic complexity, ranging from the pen-and-paper methods of the past, through machines like Enigma in World War II, to the computer-based schemes of the present. The results of cryptanalysis have also changed — it is no longer possible to have unlimited success in codebreaking, and there is a hierarchical classification of what constitutes a rare practical attack. In the mid-1970s, a new class of cryptography was introduced: asymmetric cryptography. Methods for breaking these cryptosystems are typically radically different from before, and usually involve solving a carefully-constructed problem in pure mathematics, the most well-known being integer factorization.

| Contents |

History of cryptanalysis

- Main article: History of cryptography.

Cryptanalysis has coevolved together with cryptography, and the contest can be traced through the history of cryptography — new ciphers being designed to replace old broken designs, and new cryptanalytic techniques invented to crack the improved schemes. In practice, they are viewed as two sides of the same coin: in order to create secure cryptography, you have to design against possible cryptanalysis.

Classical cryptanalysis

Although the actual word "cryptanalysis" is relatively recent (it was coined by William Friedman in 1920), methods for breaking codes and ciphers are much older. The first known recorded explanation of cryptanalysis was given by 9th century Arabic polymath Abu Yusuf Yaqub ibn Ishaq al-Sabbah Al-Kindi in A Manuscript on Deciphering Cryptographic Messages. This treatise includes a description of the method of frequency analysis (Ibraham, 1992).

Frequency analysis is the basic tool for breaking classical ciphers. In natural languages, certain letters of the alphabet appear more frequently than others; in English, "E" is likely to be the most common letter in any given sample of text. Similarly, the digraph "TH" is the most likely pair of letters, and so on. Frequency analysis relies on a cipher failing to hide these statistics. For example, in a simple substitution cipher (where each letter is simply replaced with another), the most frequent letter in the ciphertext would be a likely candidate for "E".

Frequency analysis relies as much on linguistic knowledge as it does on statistics, but as ciphers became more complex, mathematics gradually became the predominant approach to cryptanalysis. This change was particularly evident during World War II, where efforts to crack Axis ciphers required new levels of mathematical sophistication. Moreover, automation was for the first time applied to cryptanalysis with the Bomba device and the Colossus — one of the earliest computers.

Modern cryptanalysis

Even though computation was used to great effect in cryptanalysis in World War II, it also made possible new methods of cryptography orders of magnitude more complex than ever before. Taken as a whole, modern cryptography has become much more impervious to cryptanalysis than the pen-and-paper systems of the past, and now seems to have the upper hand against pure cryptanalysis. The historian David Kahn notes, "Many are the cryptosystems offered by the hundreds of commercial vendors today that cannot be broken by any known methods of cryptanalysis. Indeed, in such systems even a chosen plaintext attack, in which a selected plaintext is matched against its ciphertext, cannot yield the key that unlock other messages. In a sense, then, cryptanalysis is dead. But that is not the end of the story. Cryptanalysis may be dead, but there is - to mix my metaphors - more than one way to skin a cat." (Remarks on the 50th Anniversary of the National Security Agency, 1 November, 2002). Kahn goes on to mention increased opportunities for interception, bugging, side channel attacks and quantum computers as replacements for the traditional means of cryptanalysis [1] (http://www.fas.org/irp/eprint/kahn.html).

Kahn may have been premature in his cryptanalysis postmortem; weak ciphers are not yet extinct. In academia, new designs are regularly presented, and are also frequently broken: the 1984 block cipher Madryga was found to be susceptible to ciphertext-only attacks in 1998; FEAL-4, proposed as a replacement for the DES standard encryption algorithm, was demolished by a spate of attacks from the academic community, many of which are entirely practical. In industry, too, ciphers are not free from flaws: for example, the A5/1, A5/2 and CMEA algorithms, used in mobile phone technology, can all be broken in hours, minutes or even in real-time using widely-available computing equipment. In 2001, Wired Equivalent Privacy (WEP), a protocol used to secure Wi-Fi wireless networks, was shown to be susceptible to a practical related-key attack.

The results of cryptanalysis

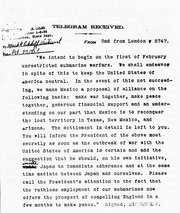

Successful cryptanalysis has undoubtably influenced history; the ability to read the presumed-secret thoughts and plans of others can be a decisive advantage, and never more so than during wartime. For example, in World War I, the breaking of the Zimmermann telegram was instrumental in bringing the United States into the war. In World War II, the cryptanalysis of the German ciphers — including the Enigma machine and the Lorenz cipher — has been credited with everything between shortening the end of the European war by a few months to determining the eventual result (see ULTRA). The United States also benefited from the cryptanalysis of the Japanese PURPLE code (see MAGIC).

Governments have long recognised the potential benefits of cryptanalysis for intelligence, both military and diplomatic, and established dedicated organisations devoted to breaking the codes and ciphers of other nations, for example, GCHQ and the NSA, organisations which are still very active today. Even as of 2004, it was reported that the United States had broken Iranian ciphers. (It is unknown, however, whether this was pure cryptanalysis, or whether other factors were involved: [2] (http://news.bbc.co.uk/1/hi/technology/3804895.stm)).

Characterising attacks

Cryptanalytic attacks vary in potency and how much of a threat they pose to real-world cryptosystems. A certificational weakness is a theoretical attack that is unlikely to be applicable in any real-world situation; the majority of results found in modern cryptanalytic research are of this type. Essentially, the practical importance of an attack is dependent on the answers to the following three questions:

- What knowledge and capabilities are needed as a prerequisite?

- How much additional secret information is deduced?

- How much effort is required? (What is the computational complexity?)

Prior knowledge: scenarios for cryptanalysis

Cryptanalysis can be performed under a number of assumptions about how much can be observed or found out about the system under attack. As a basic starting point it is normally assumed that, for the purposes of analysis, the general algorithm is known; this is Shannon's Maxim of "the enemy knows the system". This is a reasonable assumption in practice — throughout history, there are countless examples of secret algorithms falling into wider knowledge, variously through espionage, betrayal and reverse engineering. (On occasion, ciphers have been reconstructed through pure deduction; for example, the German Lorenz cipher and the Japanese Purple code, and a variety of classical schemes).

Other assumptions include:

- Ciphertext-only: the cryptanalyst has access only to a collection of ciphertexts or codetexts.

- Known-plaintext: the attacker has a set of ciphertexts to which he knows the corresponding plaintext.

- Chosen-plaintext (chosen-ciphertext): the attacker can obtain the ciphertexts (plaintexts) corresponding to an arbitrary set of plaintexts (ciphertexts) of his own choosing.

- Adaptive chosen-plaintext: like a chosen-plaintext attack, except the attacker can choose subsequent plaintexts based on information learned from previous encryptions. Similarly Adaptive chosen ciphertext attack.

- Related-key attack: Like a chosen-plaintext attack, except the attacker can obtain ciphertexts encrypted under two different keys. The keys are unknown, but the relationship between them is known; for example, two keys that differ in the one bit.

These types of attack clearly differ in how plausible they would be to mount in practice. Although some are more likely than others, cryptographers will often take a conservative approach to security and assume the worst-case when designing algorithms, reasoning that if a scheme is secure even against unrealistic threats, then it should also resist real-world cryptanalysis as well.

The assumptions are often more realistic than they might seem upon first glance. For a known-plaintext attack, the cryptanalyst might well know or be able to guess at a likely part of the plaintext, such as an encrypted letter beginning with "Dear Sir", or a computer session starting with "LOGIN:". A chosen-plaintext attack is less likely, but it is sometimes plausible: for example, you could convince someone to forward a message you have given them, but in encrypted form. Related-key attacks are mostly theoretical, although they can be realistic in certain situations, for example, when constructing cryptographic hash functions using a block cipher.

Classifying success in cryptanalysis

The results of cryptanalysis can also vary in usefulness. For example, cryptographer Lars Knudsen (1998) classified various types of attack on block ciphers according to the amount and quality of secret information that was discovered:

- Total break — the attacker deduces the secret key.

- Global deduction — the attacker discovers a functionally equivalent algorithm for encryption and decryption, but without learning the key.

- Instance (local) deduction — the attacker discovers additional plaintexts (or ciphertexts) not previously known.

- Information deduction — the attacker gains some Shannon information about plaintexts (or ciphertexts) not previously known.

- Distinguishing algorithm — the attacker can distinguish the cipher from a random permutation.

Similar considerations apply to attacks on other types of cryptographic algorithm.

Complexity

Attacks can also be characterised by the amount of resources they require. This can be in the form of:

- Time — the number of "primitive operations" which must be performed. This is quite loose; primitive operations could be basic computer instructions, such as addition, XOR, shift, and so forth, or entire encryption methods.

- Memory — the amount of storage required to perform the attack.

- Data — the quantity of plaintexts and ciphertexts required.

In academic cryptography, a weakness or a break in a scheme is usually defined quite conservatively. Bruce Schneier sums up this approach: "Breaking a cipher simply means finding a weakness in the cipher that can be exploited with a complexity less than brute force. Never mind that brute-force might require 2128 encryptions; an attack requiring 2110 encryptions would be considered a break...simply put, a break can just be a certificational weakness: evidence that the cipher does not perform as advertised." (Schneier, 2000).

Cryptanalysis of asymmetric cryptography

Asymmetric cryptography (or public key cryptography) is cryptography that relies on using two keys; one private, and one public. Such ciphers invariably rely on "hard" mathematical problems as the basis of their security, so an obvious point of attack is to develop methods for solving the problem. The security of two-key cryptography depends on mathematical questions in a way that single-key cryptography generally does not, and conversely links cryptanalysis to wider mathematical research in a new way.

Asymmetric schemes are designed around the (conjectured) difficulty of solving various mathematical problems. If an improved algorithm can be found to solve the problem, then the system is weakened. For example, the security of the Diffie-Hellman key exchange scheme depends on the difficulty of calculating the discrete logarithm. In 1983, Don Coppersmith found a computationally feasible way to find discrete logarithms, and thereby gave to the cryptanalyst a tool with which to break the Diffie-Hellman cryptosystems. Another scheme, the popular RSA algorithm, remains unbroken. Its security depends (in part) upon the difficulty of integer factorisation — a breakthrough in factoring would impact the security of RSA.

In 1980, one could factor a difficult 50-digit number at an expense of 1012 elementary computer operations. By 1984 the state of the art in factoring algorithms had advanced to a point where a 75-digit number could be factored in 1012 operations. Advances in computing technology also meant that the operations could be performed much faster, too. Moore's law predicts that computer speeds will continue to increase. Factoring techniques may continue do so as well, but will most likely depend on mathematical insight and creativity, neither of which has ever been successfully predictable. 150-digit numbers of the kind once used in RSA have been factored. The effort was greater than above, but was not unreasonable on fast modern computers. By the start of the 21st century, 150-digit numbers were no longer considered a large enough key size for RSA. Numbers with several hundred digits are still considered too hard to factor in 2005, though methods will probably continue to improve over time, requiring key size to keep pace or new algorithms to be used.

Another distinguishing feature of asymmetric schemes is that, unlike attacks on symmetric cryptosystems, any cryptanalysis has the opportunity to make use of knowledge gained from the public key.

Quantum computing applications for cryptanalysis

Quantum computers have potential for use in cryptanalysis. Because quantum states can exist in superposition (ie, entangled), a new paradigm for computation is possible. Peter Shor of Bell Labs proved the possibility, and various teams have demonstrated one or another aspect of quantum computer engineering in the years since. Thus far, only very limited proof of possibility designs have been demonstrated. There is, at this writing, no credible prospect of an actual, usable, quantum computer.

However, were a quantum computer to be built, many things would change. Parallel computation would likely become the norm, and some aspects of cryptography would change.

In particular, since a quantum computer would be able to conduct extremely fast brute force key searches, key lengths now considered effectively beyond any brute force attacker's resources would become practical. Key lengths necessary to be beyond a quantum computer's capacity would be longer, probably considerably longer. While some popular writers have declared that no encryption would remain secure when quantum computers become available, it seems likely that simply adding bits to key lengths will prohibit brute force attacks, even with quantum computers.

A second possibility is that increased computational power may make possible non brute force key search attacks against one or more currently impregnable algorithms, or classes of algorithms. For instance, not all progress in prime factorisation has been due to algorithmic improvements. Some has been due to increasing computer power, and the existence of working quantum computers may considerably advance factorization thus increasing the vulnerability of some cryptographic algorithms. This much is perhaps foreseeable, if not clearly. What cannot be anticipated is a theoretical breakthrough, requiring quantum computing, which would make possible currently impractical (or even unknown) attacks. In the absence of a method for predicting breakthroughs, we will simply have to wait.

It is unknown whether there is a polynomial time encryption algorithm for which decryption requires exponential time, even for a quantum computer.

Methods of cryptanalysis

Classical cryptanalysis:

Symmetric algorithms:

- Differential cryptanalysis

- Linear cryptanalysis

- Integral cryptanalysis

- Statistical cryptanalysis

- Mod-n cryptanalysis

- XSL attack

- Slide attack

Other methods:

- Birthday attack

- Man in the middle attack

- Brute force attack

- Gardening (cryptanalysis)

- Differential Power Analysis

See also

- Rubber-hose cryptanalysis

- Side-channel attack

- Decipherment

- Cryptography

- Cryptography portal

- Topics in Cryptography

External links

- Basic Cryptanalysis (http://www.umich.edu/~umich/fm-34-40-2/)

References

- Helen Fouché Gaines, "Cryptanalysis", 1939, Dover. ISBN 0486200973

- Ibraham A. “Al-Kindi: The origins of cryptology: The Arab contributions”, Cryptologia, 16(2) (April 1992) pp. 97–126.

- David Kahn, "The Codebreakers - The Story of Secret Writing", 1967. ISBN 0684831309

- Lars R. Knudsen: Contemporary Block Ciphers. Lectures on Data Security 1998: 105-126

- Bruce Schneier, "Self-Study Course in Block Cipher Cryptanalysis (http://www.schneier.com/paper-self-study.html)", Cryptologia, 24(1) (January 2000), pp. 18–34.

da:Kryptoanalyse de:Kryptoanalyse fr:Cryptanalyse nl:Crypto-analyse pl:Kryptoanaliza pt:Cripto-análise